From Integration to Fragmentation: How a Radical Theory of Consciousness Explains Trauma

The question of consciousness—how a physical brain creates our rich, subjective inner world—is one of science’s deepest mysteries. For centuries, we’ve grappled with how fleeting thoughts, vivid emotions, and a stable sense of “self” can emerge from biological tissue.

But this question isn’t just philosophical. For millions living with the aftermath of trauma, the nature of consciousness is a daily, painful struggle. In conditions like Post-Traumatic Stress Disorder (PTSD), consciousness itself feels broken. It fragments, turning against itself with intrusive memories, flashbacks, and a persistent sense of disconnection from reality.

In his groundbreaking Integrated Information Theory (IIT), neuroscientist Giulio Tononi offers a powerful, mathematical framework that may connect these two worlds. He proposes that consciousness isn’t a mysterious property but a quantity that is measurable in principle: the amount of information a system integrates as a whole, beyond what its parts carry independently.

This essay explores IIT not just as an abstract model, but as a critical lens for understanding trauma. By defining consciousness as integration, IIT provides a stunningly precise language for defining trauma as dis-integration—a shattering of the mind’s unity.

The Mathematics of Unity: What is Integrated Information (Φ)?

At the heart of IIT is a single, powerful measure: Phi (Φ).

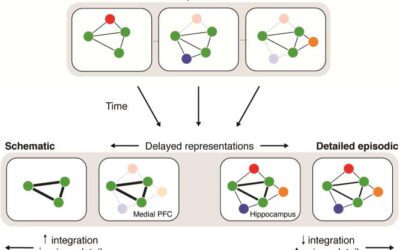

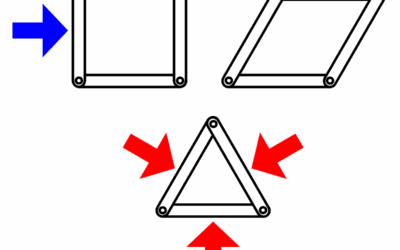

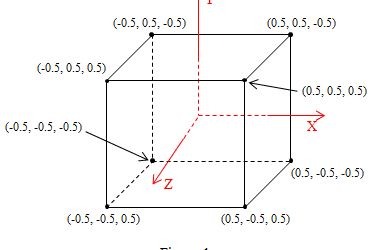

Phi (represented as $\Phi$) quantifies a system’s capacity to integrate information. Think of it as a measure of “wholeness.” A system has high $\Phi$ if its whole is truly greater than the sum of its parts. You cannot understand the system by “cutting it up” and looking at the pieces individually.

- High $\Phi$ (Integration): An orchestra playing a symphony. The music emerges from the complex, unified interaction of all the musicians. You can’t understand the symphony by listening to each instrument in isolation. The human brain in a wakeful state, binding sensory input, memory, and emotion into a single experience, is the ultimate example of a high-$\Phi$ system.

- Low $\Phi$ (Simple or Fragmented): A disconnected pile of orchestra instruments, where each piece sits on its own and nothing interacts. Or, crucially, a digital camera. Its sensor takes in vast amounts of information (millions of pixels), but it is just a collection of independent light detectors. There’s no “whole” experience; each pixel is unaffected by its neighbors.

According to Tononi, consciousness is this integrated information. It’s not all-or-nothing; it’s a continuum. A system with a high $\Phi$ is highly conscious. A system with a low $\Phi$ (like a brain in dreamless sleep or under anesthesia) has little or no consciousness.
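
The contrast between an integrated whole and a collection of independent parts can be made concrete with a toy calculation. The sketch below is not Tononi’s $\Phi$, which is defined over a system’s cause-effect structure and is far more involved. It uses plain mutual information between two halves of a four-unit system as a rough stand-in for “how much the whole carries beyond its parts.” The two example systems (`camera` and `coupled`) and all function names are assumptions made up for illustration.

```python
import itertools
import math


def entropy(dist):
    """Shannon entropy, in bits, of a {state: probability} mapping."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def marginal(joint, indices):
    """Marginal distribution of the joint over the given unit indices."""
    out = {}
    for state, p in joint.items():
        key = tuple(state[i] for i in indices)
        out[key] = out.get(key, 0.0) + p
    return out


def mutual_information(joint, part_a, part_b):
    """I(A;B) = H(A) + H(B) - H(A,B), in bits."""
    h_a = entropy(marginal(joint, part_a))
    h_b = entropy(marginal(joint, part_b))
    h_ab = entropy(marginal(joint, part_a + part_b))
    return h_a + h_b - h_ab


# Hypothetical "camera sensor": four independent pixels, each on or off
# with probability 0.5. Every one of the 16 joint states is equally likely.
camera = {s: 1 / 16 for s in itertools.product((0, 1), repeat=4)}

# Hypothetical coupled system: the four units always agree with each other.
coupled = {(0, 0, 0, 0): 0.5, (1, 1, 1, 1): 0.5}

# Cut each system into a left half (units 0, 1) and a right half (units 2, 3).
camera_mi = mutual_information(camera, (0, 1), (2, 3))    # 0.0 bits
coupled_mi = mutual_information(coupled, (0, 1), (2, 3))  # 1.0 bit

print(f"camera halves share  {camera_mi:.1f} bits")
print(f"coupled halves share {coupled_mi:.1f} bits")
```

Cutting the camera in two loses nothing, because its halves never informed each other. Cutting the coupled system destroys one bit of shared information. A real $\Phi$ calculation also requires that the system be able to take on many different states, which units that merely copy each other cannot do, so this proxy captures only the integration half of the idea.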

This idea is built on a set of core axioms—self-evident truths about our own experience:

- Intrinsic Existence: Consciousness exists, for you.

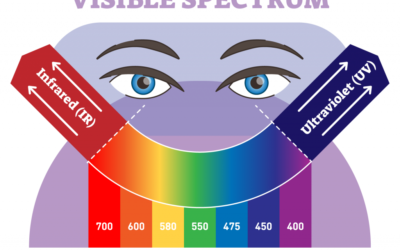

- Composition: It’s structured, with many distinct elements (e.g., colors, sounds, thoughts).

- Information: Each experience is specific; it’s this experience and not another.

- Integration: Consciousness is unified. You don’t experience the color “red” separately from the “shape” of an apple. You experience a single, unified “red apple.”

- Exclusion: Consciousness is definite. Your experience includes this thought and this feeling, and excludes all others at this moment.

The axiom of integration is the key. IIT proposes that the brain’s “main complex”—particularly in the posterior cortex—is a physical structure built to maximize $\Phi$, binding countless streams of information into the single, unified “you” that you experience every moment.

The Un-Integrated Mind: An IIT Framework for Trauma and PTSD

This is where the theory becomes a vital tool for mental health. If consciousness is integration, then trauma is a failure of integration.

When a person experiences an event that is overwhelmingly threatening, the brain’s normal information-processing systems shut down. The experience is not “digested” and woven into the person’s life story. Instead, it’s stored in a “raw,” fragmented state.

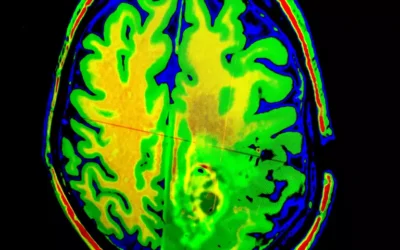

From an IIT perspective, trauma shatters the brain’s $\Phi$.

Dissociation as a “Split” in Consciousness

The hallmark of trauma is dissociation. This is the mind’s emergency defense, a way of “splitting off” from an unbearable experience. Sufferers describe it as “spacing out,” feeling unreal, or watching themselves from outside their own bodies.

IIT provides a precise model for this:

- The brain’s “main complex” (the unified “self”) fails.

- The system partitions into sub-systems that no longer integrate information with each other.

- The traumatic memory—with its terror, sensations, and beliefs—becomes a high-information “island,” completely disconnected from the main complex.

- This “splintering” of the mind is, by definition, a catastrophic drop in the system’s overall $\Phi$. The unified “self” literally disintegrates into warring, disconnected parts.
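
The “island” picture above can be sketched with a toy model. The code below is an illustration under loud assumptions, not IIT’s actual $\Phi$ and not a model of any brain. It treats a four-unit binary system as a probability distribution, tries every way of cutting it into two parts, and reports the cut across which the least information is shared. Borrowing loosely from IIT’s idea of a minimum information partition, that weakest cut serves as a stand-in for how unified the system is. The systems `unified` and `fragmented` and the helper names are hypothetical.

```python
import itertools
import math


def entropy(dist):
    """Shannon entropy, in bits, of a {state: probability} mapping."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def marginal(joint, indices):
    """Marginal distribution of the joint over the given unit indices."""
    out = {}
    for state, p in joint.items():
        key = tuple(state[i] for i in indices)
        out[key] = out.get(key, 0.0) + p
    return out


def mutual_information(joint, part_a, part_b):
    """I(A;B) = H(A) + H(B) - H(A,B), in bits."""
    h_a = entropy(marginal(joint, part_a))
    h_b = entropy(marginal(joint, part_b))
    return h_a + h_b - entropy(marginal(joint, part_a + part_b))


def bipartitions(n):
    """Yield each split of units 0..n-1 into two non-empty parts exactly once."""
    units = tuple(range(n))
    for size in range(1, n // 2 + 1):
        for part_a in itertools.combinations(units, size):
            # For an even split, keep only the version whose first part
            # contains unit 0, so mirror-image splits are not counted twice.
            if 2 * size == n and 0 not in part_a:
                continue
            part_b = tuple(u for u in units if u not in part_a)
            yield part_a, part_b


def weakest_cut(joint, n):
    """Return (shared_bits, (part_a, part_b)) for the least-informative cut."""
    return min(
        ((mutual_information(joint, a, b), (a, b)) for a, b in bipartitions(n)),
        key=lambda item: item[0],
    )


# Hypothetical unified system: the four units are jointly constrained to
# even parity, so every unit depends on all of the others.
unified = {
    s: 1 / 8 for s in itertools.product((0, 1), repeat=4) if sum(s) % 2 == 0
}

# Hypothetical fragmented system: units 0-1 mirror each other, units 2-3
# mirror each other, but the two pairs are statistically independent.
fragmented = {(a, a, b, b): 0.25 for a in (0, 1) for b in (0, 1)}

unified_bits, unified_cut = weakest_cut(unified, 4)
fragmented_bits, fragmented_cut = weakest_cut(fragmented, 4)

print(f"unified:    weakest cut shares {unified_bits:.1f} bits")
print(f"fragmented: weakest cut shares {fragmented_bits:.1f} bits at {fragmented_cut}")
```

In the unified system every possible cut severs one bit of shared information, so there is no clean way to split it. In the fragmented system the search finds the cut between units 0-1 and units 2-3, where nothing at all is shared. Each pair still carries information internally, but the system as a whole scores zero, which is the formal sense in which a disconnected island lowers overall integration.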

Flashbacks as Un-Integrated Information

This framework also explains the terrifying nature of flashbacks and intrusive memories. These are not “memories” in the normal sense. A normal memory is integrated: you know it’s in the past and you are here, now, safe.

A traumatic intrusion is an un-integrated piece of information. Because it was never woven into the main conscious complex, it doesn’t have a “time stamp.” When triggered, it doesn’t feel like a memory. It feels like it is happening right now, with all its original sensory and emotional force. This fragment of information hijacks the main complex, but it never truly joins it.

PTSD, in this light, is a chronic state of low and unstable $\Phi$. The brain struggles, and fails, to maintain a unified, integrated conscious field, constantly threatened by “islands” of un-integrated traumatic information.

Healing as Re-Integration: Trauma Therapy Through an IIT Lens

If trauma is dis-integration, then healing is re-integration.

This perspective reframes the goal of trauma therapy. The aim is not to “get rid of” the memory, but to build a conscious system strong enough to integrate it.

- Therapeutic Alliance: Creating a safe relationship with a therapist is the first step. This “co-regulates” the survivor’s nervous system, calming the brain enough to allow integration to even begin.

- Processing Memories: Modalities like EMDR (Eye Movement Desensitization and Reprocessing) or Cognitive Processing Therapy (CPT) are structured ways to re-activate the traumatic memory in a safe context.

- Connecting the Fragments: The goal is to connect this “island” of memory to the main complex. This involves linking it to new information: “I am safe now,” “It is in the past,” “I survived.”

- Increasing $\Phi$: As the memory is processed, it ceases to be an un-integrated fragment. It becomes just one part of the person’s larger, more complex life story. It becomes a memory, not a re-experiencing.

In Tononi’s terms, successful therapy literally increases the brain’s $\Phi$. It repairs the fragmented system, rebuilding a larger, more robust, and more unified conscious “self” that can hold the past without being shattered by it.

Broader Implications: From Sleep to Machine Minds

This powerful model of integration vs. fragmentation extends far beyond trauma.

- Sleep and Anesthesia: IIT explains why we “disappear” in dreamless sleep or under general anesthesia. It’s not that the brain “turns off”—it’s still highly active. But its activity becomes de-integrated. Different brain regions stop communicating in complex ways, $\Phi$ plummets, and the unified “self” dissolves.

- The Cerebellum: IIT also offers an explanation for why the cerebellum, despite containing more neurons than the rest of the brain combined, contributes little to consciousness. Its crystal-like, parallel structure is inefficient at integrating information, resulting in a very low $\Phi$.

- Machine Consciousness: IIT offers a provocative, and sobering, take on artificial intelligence. It suggests that consciousness is not about behavior or intelligence. On this view, a “smart” AI like a chatbot, however fluently it mimics human language, would have a $\Phi$ at or near zero. IIT assigns zero $\Phi$ to purely “feed-forward” processing, and it judges a system by the causal structure of its physical hardware rather than by the software it runs. To build a truly conscious machine, Tononi argues, we would need to design an architecture that is, like the brain, built to maximize internal information integration: a system whose “whole” is vastly greater than its parts.
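
The feed-forward point can be illustrated with a small structural check. In IIT, feedback (elements that causally affect one another in loops) is treated as a necessary condition for integration, though not a sufficient one. The sketch below asks only that narrow question: does a directed wiring diagram contain any loop at all? It says nothing about how much $\Phi$ a looped system would have. The function name and the two example networks are hypothetical.

```python
def has_feedback(nodes, edges):
    """Return True if the directed graph contains at least one cycle.

    Uses Kahn's algorithm: repeatedly remove nodes that have no incoming
    edges. If some nodes can never be removed, they sit on or behind a loop.
    """
    indegree = {node: 0 for node in nodes}
    successors = {node: [] for node in nodes}
    for src, dst in edges:
        successors[src].append(dst)
        indegree[dst] += 1

    ready = [node for node in nodes if indegree[node] == 0]
    removed = 0
    while ready:
        node = ready.pop()
        removed += 1
        for nxt in successors[node]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                ready.append(nxt)
    return removed < len(nodes)


layers = ["input", "hidden", "output"]

# Hypothetical strictly feed-forward pipeline: signals only flow forward.
feed_forward = [("input", "hidden"), ("hidden", "output")]

# The same pipeline with one connection feeding the output back in.
recurrent = feed_forward + [("output", "hidden")]

ff_has_loop = has_feedback(layers, feed_forward)  # False
rec_has_loop = has_feedback(layers, recurrent)    # True

print("feed-forward has feedback:", ff_has_loop)
print("recurrent has feedback:   ", rec_has_loop)
```

A network that fails this check is, on IIT’s account, ruled out as a candidate for consciousness no matter how sophisticated its outputs are. Passing it only means the system clears the first hurdle.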

Criticisms and the Path Forward

IIT is not without its critics. The primary challenge is practical: calculating $\Phi$ exactly for any complex system, like a human brain, is computationally intractable, because the calculation has to consider the many ways the system can be partitioned, and that number explodes as elements are added. Others argue that it doesn’t fully solve the “hard problem”: why any of this physical integration should feel like anything at all.
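
A short counting exercise shows why the computation gets out of hand. The sketch below does not compute $\Phi$. It only counts how many ways a system of `n` elements can be divided up, which gives a sense of the search space an exhaustive calculation would face. The function names are made up for illustration.

```python
def bipartition_count(n):
    """Number of ways to split n elements into two non-empty groups."""
    return 2 ** (n - 1) - 1


def bell_number(n):
    """Number of ways to split n elements into any number of non-empty groups.

    Computed with the Bell triangle: each row starts with the last entry of
    the previous row, and each further entry adds the neighbor above-left.
    """
    row = [1]
    for _ in range(n - 1):
        next_row = [row[-1]]
        for value in row:
            next_row.append(next_row[-1] + value)
        row = next_row
    return row[-1]


for n in (4, 10, 20, 40):
    print(
        f"{n:>2} elements: "
        f"{bipartition_count(n):,} two-way cuts, "
        f"{bell_number(n):,} partitions overall"
    )
```

Four elements can be cut in two in only 7 ways, and ten elements have 115,975 possible partitions overall. The counts keep growing faster than exponentially, and each candidate partition would itself require evaluating a state space that doubles with every binary element added. A human brain has tens of billions of neurons, which is why practical work relies on approximations and proxy measures rather than exact values.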

Despite these challenges, Integrated Information Theory offers a revolutionary shift in perspective. It moves consciousness from a vague philosophical mystery to a property of the physical world that is, at least in principle, tangible and measurable.

By defining consciousness as integration, IIT gives us more than just a theory of mind. It gives us a profound, compassionate, and actionable framework for understanding its breakdown. It re-frames the suffering of trauma not as a personal failing, but as a quantifiable, physical disruption of the very processes that make us whole. And it lights the path forward, showing that healing is the brave and difficult work of re-integration—of slowly, piece by piece, building a more unified self.

References

- Balduzzi, D., & Tononi, G. (2008). Integrated information in discrete dynamical systems: Motivation and theoretical framework. PLoS Computational Biology, 4(6), e1000091.

- Koch, C. (2019). The feeling of life itself: Why consciousness is widespread but can’t be computed. MIT Press.

- Oizumi, M., Albantakis, L., & Tononi, G. (2014). From the phenomenology to the mechanisms of consciousness: Integrated Information Theory 3.0. PLoS Computational Biology, 10(5), e1003588.

- Tononi, G. (2004). An information integration theory of consciousness. BMC Neuroscience, 5(1), 42.

- Tononi, G., Boly, M., Massimini, M., & Koch, C. (2016). Integrated information theory: From consciousness to its physical substrate. Nature Reviews Neuroscience, 17(7), 450-461.
