In 2014, a researcher in India thought the word “Hola.” Five thousand miles away, in France, another person perceived a flash of light in their peripheral vision. Then another. Then nothing. Then another flash.

The pattern meant something. The receiver decoded it.

The word had traveled from one brain to another without either person speaking, typing, or moving. No sound. No screen. No physical contact. Just two skulls, some electrodes, and the internet.

This is not science fiction. This is peer-reviewed neuroscience, published a decade ago. And the technology has advanced dramatically since then.

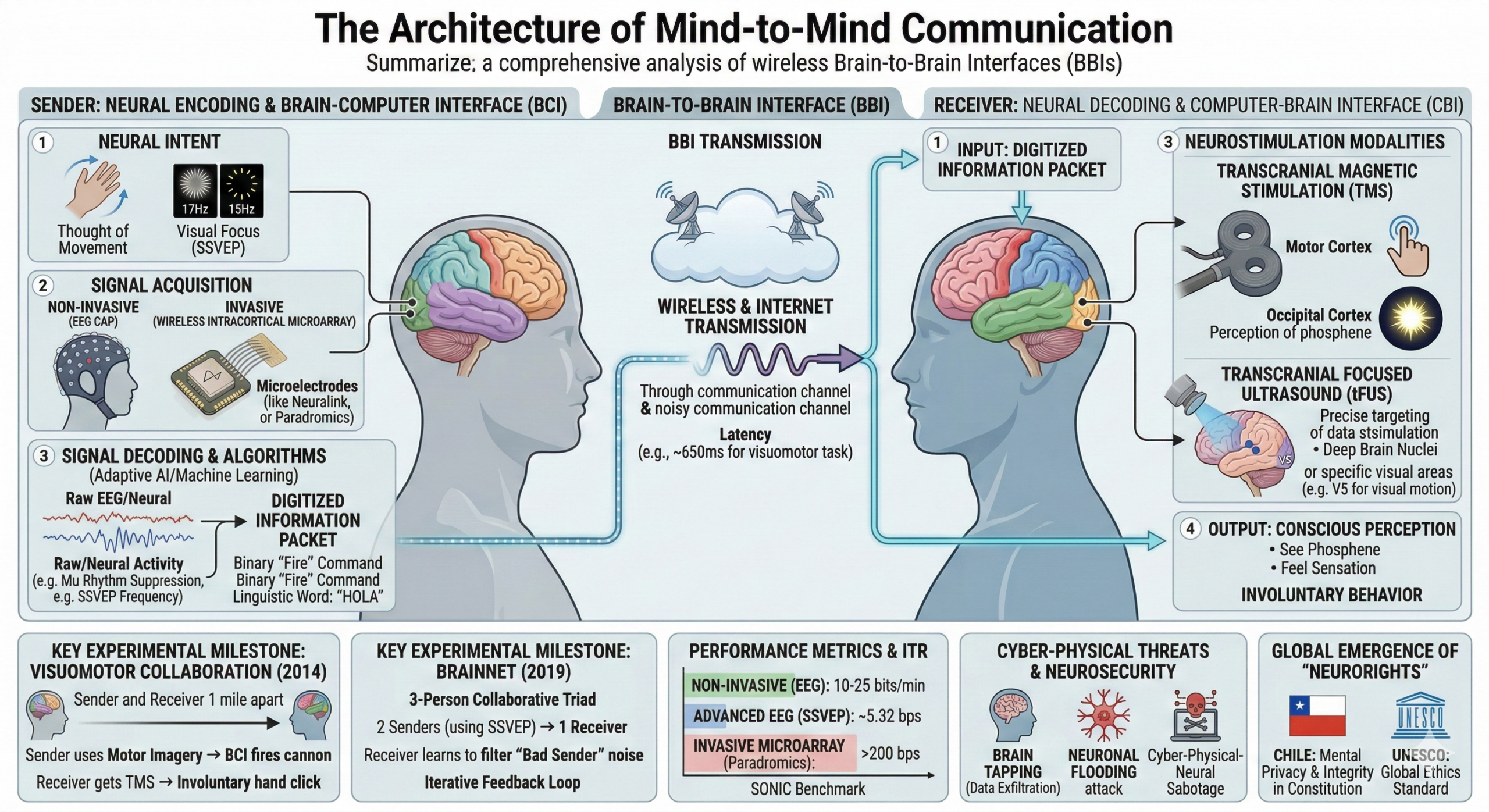

How It Works

A brain-to-brain interface (BBI) connects two systems that already exist.

First, a brain-computer interface (BCI) reads electrical activity from a “sender’s” brain using EEG electrodes or, increasingly, implanted microarrays. Machine learning algorithms decode that activity into digital signals representing intentions, commands, or concepts.

Second, a computer-brain interface (CBI) delivers information to a “receiver’s” brain using transcranial magnetic stimulation (TMS) or focused ultrasound. These technologies can induce perceptions (like flashes of light), motor responses (like involuntary hand movements), or even shifts in attention and cognitive processing.

Connect them over the internet, and you have telepathy. Crude telepathy, but telepathy nonetheless.
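To make the chain concrete, here is a minimal sketch of the two halves working together. It is a toy model, not any lab's actual protocol: the 5-bit letter code, the function names, and the "flash"/"nothing" labels are all assumptions chosen for illustration. The sender side turns a word into bits, the receiver side turns each bit into a percept, and the receiver decodes the pattern.

```python
# Toy model of the BCI -> internet -> CBI chain. Every name here is hypothetical.

def encode_word(word: str) -> list[int]:
    """Sender side: turn each letter into 5 bits (assumed code: a=0 ... z=25)."""
    bits = []
    for ch in word.lower():
        index = ord(ch) - ord("a")
        if not 0 <= index < 26:
            raise ValueError(f"unsupported character: {ch!r}")
        # Most significant bit first, 5 bits per letter.
        bits.extend((index >> shift) & 1 for shift in range(4, -1, -1))
    return bits


def stimulate(bits: list[int]) -> list[str]:
    """Receiver side: a 1 is delivered as a perceived flash, a 0 as nothing."""
    return ["flash" if bit else "nothing" for bit in bits]


def decode_percepts(percepts: list[str]) -> str:
    """Receiver side: read the flash pattern back into letters."""
    bits = [1 if p == "flash" else 0 for p in percepts]
    letters = []
    for start in range(0, len(bits), 5):
        index = 0
        for bit in bits[start:start + 5]:
            index = (index << 1) | bit
        letters.append(chr(ord("a") + index))
    return "".join(letters)


bits = encode_word("hola")      # 4 letters x 5 bits = 20 bits
percepts = stimulate(bits)      # what the receiver experiences
decoded = decode_percepts(percepts)
print(decoded)
```

A real system has noise at every step: the decoder misreads the sender, and the receiver misreports a flash. This sketch leaves all of that out to show only the shape of the pipeline.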

From Rats to Humans to Networks

The first successful BBI experiments used rats. In 2013, researchers at Duke University connected the motor cortices of two rats separated by thousands of miles. One rat in Brazil performed a task. Its neural activity was transmitted to a rat in North Carolina, which successfully used that information to complete the same task.

The rats had never met. They shared nothing but a data connection. Yet one brain was functioning as an extension of the other.

Human experiments followed within months. At the University of Washington, researchers created a two-person interface for playing a simple video game. The sender watched the screen and imagined moving their hand. The receiver, sitting in a different building with no view of the game, felt their hand jerk involuntarily and click a button.

They successfully defended a virtual city from rockets using nothing but brain-to-brain communication.

The delay from thought to action was about 650 milliseconds. Well under a second, though a little longer than a typical blink.

BrainNet: When Three Minds Become One

By 2019, researchers had scaled up to three-person networks. BrainNet connected two “senders” and one “receiver” in a collaborative Tetris-like game. The senders could see the whole screen. The receiver could only see part of it and had to rely entirely on neural transmissions from the senders to decide whether to rotate a falling block.

Here’s where it gets fascinating.

The researchers deliberately made one sender unreliable by injecting noise into their signal. Over multiple rounds, the receiver’s brain learned to ignore the unreliable sender and trust the accurate one.

This happened without any verbal communication. Without anyone telling the receiver who was trustworthy. The receiver simply learned to weight the incoming neural data differently based on past accuracy.

The average accuracy across the groups tested was 81.25%. Three strangers, connected only by electrodes and Wi-Fi, collaborating as a single cognitive system.
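The weighting idea is easy to sketch in code. What follows is a toy model under stated assumptions, not the analysis used in the BrainNet study: the `run_trials` function, the halving penalty, and the made-up trial data are all hypothetical. The receiver keeps one weight per sender, takes a weighted vote on whether to rotate the block, and cuts a sender's weight each time that sender turns out to be wrong.

```python
# Toy reliability weighting. Names, penalty, and data are illustrative assumptions.

def run_trials(truth, reports, penalty=0.5):
    """Weighted vote over senders; a sender's weight shrinks after each error."""
    weights = {name: 1.0 for name in reports}
    decisions = []
    for t, correct in enumerate(truth):
        vote_rotate = sum(w for name, w in weights.items() if reports[name][t])
        vote_keep = sum(w for name, w in weights.items() if not reports[name][t])
        # Ties resolve to "do not rotate".
        decisions.append(vote_rotate > vote_keep)
        # Feedback after the trial: penalize whoever sent the wrong answer.
        for name in weights:
            if reports[name][t] != correct:
                weights[name] *= penalty
    return decisions, weights


# True means "rotate the block". The noisy sender is wrong on every even trial.
truth = [True, False, True, True, False, True, False, False]
reports = {
    "reliable": list(truth),
    "noisy": [(not x) if i % 2 == 0 else x for i, x in enumerate(truth)],
}

decisions, weights = run_trials(truth, reports)
print(decisions)
print(weights)
```

In this run the receiver gets the first trial wrong, because both senders start with equal weight and the tie goes to "do not rotate." After that single error the noisy sender's weight drops, and the reliable sender wins every later disagreement.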

The Hardware Revolution

Early experiments used bulky EEG caps and massive TMS machines that required subjects to sit perfectly still in laboratory settings. That’s changing fast.

In 2021, the BrainGate consortium at Brown University demonstrated the first wireless intracortical transmitter for human use. Paralyzed patients controlled tablet computers with their thoughts, achieving speeds comparable to wired systems. Battery life: six hours. Charge time: two hours. Power consumption: 100 milliwatts.

By 2025, Paradromics had implanted a device smaller than a dime containing 421 microelectrodes, each thinner than a human hair, directly into human cortical tissue.

Neuralink’s “Telepathy” implant entered human trials, with patients controlling computers, playing video games, and operating robotic arms using pure neural intent.

Researchers at Columbia University developed a chip so thin and flexible it slides into the subdural space “like a piece of wet tissue paper.”

The transmission side is advancing too. Transcranial focused ultrasound (tFUS) can now target deep brain structures with millimeter precision, delivering information without surgery and without the bulk of magnetic stimulation systems.

We’re not far from BBIs that work outside the laboratory.

What Gets Transmitted

Current systems are limited. They transmit binary commands (yes/no, fire/don’t fire), simple motor intentions, and basic perceptual signals like phosphenes (flashes of light). The information transfer rate of the best non-invasive systems maxes out around 5 bits per second. Enough for a slow conversation. Not enough for streaming consciousness.

But intracortical systems are pushing past 200 bits per second. That’s 40 times faster than surface EEG. Implanted electrodes can do this because they record the firing of individual neurons directly instead of a blurred sum through the skull.
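The arithmetic behind those two figures is worth a quick worked example. The sketch below assumes 5 bits per letter (enough for a 26-letter alphabet, since 2**5 = 32) and no error correction. Both are simplifying assumptions, and the function name is made up for illustration. Only the 5 and 200 bits-per-second rates come from the text above.

```python
# Back-of-envelope transmission times. BITS_PER_LETTER is an assumption.

BITS_PER_LETTER = 5          # 2**5 = 32 symbols covers 26 letters
EEG_RATE = 5                 # bits per second, best non-invasive systems
IMPLANT_RATE = 200           # bits per second, intracortical systems


def seconds_to_send(text: str, bits_per_second: float) -> float:
    """Time to send the letters of `text` at a given bit rate, ignoring errors."""
    letters = sum(1 for ch in text if ch.isalpha())
    return letters * BITS_PER_LETTER / bits_per_second


word = "hola"
eeg_seconds = seconds_to_send(word, EEG_RATE)          # 20 bits at 5 bits/s
implant_seconds = seconds_to_send(word, IMPLANT_RATE)  # 20 bits at 200 bits/s
speedup = IMPLANT_RATE / EEG_RATE

print(f"EEG: {eeg_seconds:.1f} s, implant: {implant_seconds:.1f} s, speedup: {speedup:.0f}x")
```

At the non-invasive rate, one short word takes about four seconds, roughly a letter per second. At the intracortical rate it takes a tenth of a second. Real systems add redundancy to survive noise, so actual times are longer than this idealized figure.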

The bottleneck isn’t physics. It’s decoding. The challenge is building algorithms sophisticated enough to translate the chaotic, noisy, constantly shifting activity of a biological brain into structured digital signals, and vice versa.

That challenge is being solved by the same AI revolution transforming everything else.

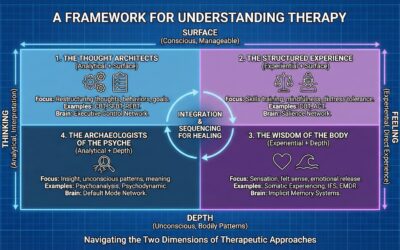

What This Means for Therapy

Some implications are straightforward. Locked-in patients could communicate directly. Stroke survivors could receive motor commands from a healthy therapist’s brain to guide rehabilitation. Couples therapy could involve actual transmission of emotional states rather than verbal approximations.

But the deeper implications are stranger.

What happens to the therapeutic relationship when a clinician can perceive a patient’s neural state directly? When a patient can feel that the therapist genuinely understands because the understanding is transmitted rather than inferred?

What happens to transference when the boundaries between minds become permeable?

What happens to parts work when we can potentially identify and communicate with specific neural subsystems rather than metaphorical internal family members?

What happens to the concept of individual identity when your brain learns to weight incoming signals from other minds the same way BrainNet receivers learned to trust reliable senders?

I don’t have answers. But these questions are coming whether we’re ready or not.

The Dark Side

Any technology that accesses the brain can be weaponized.

Researchers have already demonstrated side-channel attacks that extract 4-digit PIN codes from EEG data. If your thoughts can be read, your passwords can be stolen.

Security audits of commercial BCI devices have identified over 300 vulnerabilities in systems from major manufacturers. Brain data is the ultimate biometric. Unlike a password, you cannot change it if it’s compromised.

In principle, a hijacked CBI could deliver unauthorized stimulation. Researchers have simulated “neuronal flooding” attacks that overwhelm cognitive function and “neuronal scanning” attacks that slowly alter synaptic plasticity over time. These attacks have been shown only in simulation so far, but the prospect of someone remotely inducing seizures, manipulating emotions, or subtly reshaping beliefs is no longer pure speculation.

And then there’s the question of consent. If I transmit a thought to you without your permission, is that assault? If your brain unconsciously incorporates my neural patterns into your decision-making, who made the decision?

The Legal Response

In 2021, Chile became the first country to enshrine “neurorights” into its constitution. The amendment treats brain data with the same legal protection as a physical organ. You cannot buy, sell, or traffic neural information without explicit consent.

In 2023, Chile’s Supreme Court ordered an American neurotechnology company to delete all brain data it had collected from a Chilean citizen, ruling the data was obtained without proper consent.

UNESCO has developed a global framework on neurotechnology ethics, slated for formal adoption in late 2025. The framework treats neural inferences as uniquely sensitive data requiring novel consent architectures.

We are watching a new category of human rights emerge in real time.

The Biological Reality

There’s one constraint that may limit how far this goes.

The brain is not a computer. It operates on biological noise and constant variability. The same thought never produces exactly the same electrical pattern twice. Background neural activity shifts with arousal, fatigue, emotion, and metabolism.

This means perfect fidelity in brain-to-brain communication may be biologically impossible. There will always be translation errors, interpretation gaps, and the irreducible uniqueness of individual neural architectures.
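A small calculation shows why errors shrink but never vanish. The sketch below assumes the simplest possible noise model: each transmitted bit is flipped independently with a fixed probability, and the receiver takes a majority vote over repeated copies. Real neural noise is neither independent nor fixed, so this is an optimistic simplification, and the 10% flip rate and function name are assumptions for illustration.

```python
# Majority-vote error under an assumed independent bit-flip model.
from math import comb


def majority_error(flip_probability: float, copies: int) -> float:
    """Chance that a majority vote over an odd number of noisy copies is wrong."""
    if copies % 2 == 0:
        raise ValueError("use an odd number of copies so there are no ties")
    # The vote fails when more than half of the copies are flipped.
    return sum(
        comb(copies, k)
        * flip_probability ** k
        * (1 - flip_probability) ** (copies - k)
        for k in range(copies // 2 + 1, copies + 1)
    )


errors = {n: majority_error(0.1, n) for n in (1, 3, 5, 9)}
for n, err in errors.items():
    print(f"{n} copies: {err:.5f}")
```

Each added repetition lowers the error rate and slows the channel, but the error never reaches zero for any finite number of copies. Fidelity is bought with time, which is already the scarce resource at a few bits per second.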

In a strange way, this is reassuring. The self may have a biological floor that technology cannot breach.

Or it may mean that BBIs will require not just better hardware but a fundamental reconceptualization of what “communication” means when the channel is biological tissue rather than air or wire.

Where We Are

Brain-to-brain interfaces are real. They work. They’re improving fast.

We can already transmit simple commands and perceptions between human minds over the internet. We can create multi-person neural networks that collaborate on tasks. We can watch a receiver learn to trust reliable senders and filter out unreliable ones purely through brain-to-brain feedback.

The hardware is miniaturizing. The algorithms are advancing. The commercial investment is massive.

Within a decade, we may see BBIs move from research laboratories to clinical applications. Within two decades, they may be as common as cochlear implants.

This will change what it means to be a separate self. It will change what therapy looks like. It will change how we understand co-regulation, empathy, and the boundaries between minds.

We should probably start thinking about it now.

Joel Blackstock, LICSW-S, is the Clinical Director of Taproot Therapy Collective in Birmingham, Alabama. He specializes in complex trauma treatment using qEEG brain mapping, Brainspotting, and somatic approaches.
